Advanced Data Analysis Techniques: Unveiling the Power of Insights

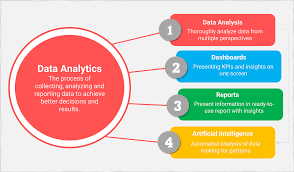

In today’s data-driven world, the ability to extract meaningful insights from vast amounts of information is a game-changer. With the advent of advanced data analysis techniques, businesses and researchers are now equipped with powerful tools to unravel hidden patterns, make informed decisions, and drive innovation.

Advanced data analysis techniques go beyond traditional methods of data examination. They involve sophisticated algorithms and statistical models that can handle complex datasets and uncover intricate relationships. Let’s explore some of these techniques and their applications:

- Machine Learning: This technique enables computers to learn from data without being explicitly programmed. By training models on historical data, machine learning algorithms can predict outcomes, classify information, or identify anomalies. From fraud detection in financial transactions to personalized recommendations in e-commerce, machine learning is now used across many industries.

- Natural Language Processing (NLP): NLP focuses on understanding human language and extracting meaning from textual data. It allows machines to comprehend written or spoken language, enabling applications such as sentiment analysis, chatbots, and language translation. NLP has transformed customer service by automating responses and providing real-time support.

- Time Series Analysis: This technique deals with analyzing data points collected over time to identify patterns or trends. Time series analysis is used in forecasting stock prices, predicting weather patterns, and monitoring equipment performance in industries like manufacturing or energy.

- Network Analysis: Network analysis examines relationships between entities in a network structure, such as social media connections or transportation networks. It helps analysts understand interactions between individuals, identify influential nodes, detect communities, and optimize network efficiency.

- Image Processing: Image processing techniques extract information from visual content such as photos or videos. Applications range from facial recognition for security purposes to medical imaging for diagnosing diseases.
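To make the network-analysis idea of "identifying influential nodes" concrete, here is a minimal sketch of one common measure, degree centrality, which scores each node by the share of other nodes it is directly connected to. The edge list and names are hypothetical, and real projects would usually rely on a dedicated graph library rather than hand-rolled code.

```python
from collections import defaultdict


def degree_centrality(edges):
    """Return {node: degree / (n - 1)} for an undirected edge list."""
    neighbours = defaultdict(set)
    for a, b in edges:
        if a == b:
            continue  # ignore self-loops
        neighbours[a].add(b)
        neighbours[b].add(a)
    n = len(neighbours)
    if n < 2:
        return {node: 0.0 for node in neighbours}
    return {node: len(adj) / (n - 1) for node, adj in neighbours.items()}


# Hypothetical social connections between five people
edges = [("ana", "ben"), ("ana", "cat"), ("ana", "dev"), ("ben", "cat"), ("dev", "eli")]
scores = degree_centrality(edges)
most_influential = max(scores, key=scores.get)
print(most_influential, round(scores[most_influential], 2))  # ana 0.75
```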

These advanced data analysis techniques have far-reaching implications across various domains:

– Healthcare: Advanced analytics can analyze patient records to identify risk factors for diseases, optimize treatment plans, and improve patient outcomes.

– Finance: Predictive models can assess market trends, detect anomalies in transactions, and enhance risk management strategies.

– Marketing: Data analysis techniques enable marketers to segment customers based on behavior patterns, personalize marketing campaigns, and measure campaign effectiveness.

– Manufacturing: Advanced analytics can optimize supply chain operations, predict maintenance needs for machinery, and improve quality control processes.

The benefits of advanced data analysis techniques are not limited to large organizations or research institutions. With the rise of user-friendly tools and cloud computing platforms, even small businesses and individuals can leverage these techniques to gain insights from their data.

However, it is essential to remember that while advanced data analysis techniques provide powerful tools, they must be used responsibly. Ethical considerations such as privacy protection and bias mitigation should always be at the forefront of any data analysis endeavor.

In conclusion, advanced data analysis techniques offer immense potential for unlocking valuable insights from complex datasets. By harnessing the power of machine learning, NLP, time series analysis, network analysis, and image processing, businesses and researchers can make informed decisions that drive innovation and create a positive impact in our ever-evolving world.

8 Essential Tips for Advanced Data Analysis Techniques

- Define clear objectives

- Collect relevant and reliable data

- Cleanse and preprocess your data

- Explore different statistical techniques

- Utilize advanced visualization tools

- Apply machine learning algorithms

- Validate results through rigorous testing

- Communicate results effectively

Define clear objectives

Advanced Data Analysis Techniques: Tip 1 – Define Clear Objectives

When embarking on a journey of advanced data analysis, one of the most crucial steps is to define clear objectives. Without a clear understanding of what you hope to achieve, your efforts may become scattered and lack focus. Defining clear objectives sets the foundation for successful data analysis and ensures that your efforts are aligned with your desired outcomes.

Here are a few reasons why defining clear objectives is essential in advanced data analysis:

Focus and Direction: Clear objectives provide focus and direction to your data analysis efforts. They help you identify the specific questions you want to answer or the problems you want to solve. This clarity enables you to streamline your approach and allocate resources effectively.

Scope Management: Defining objectives helps manage the scope of your analysis. It prevents you from getting overwhelmed by vast amounts of data or going off track by exploring irrelevant avenues. By clearly outlining your goals, you can prioritize and concentrate on the most relevant aspects of your analysis.

Measurable Outcomes: Clear objectives allow for measurable outcomes. When you define specific goals, it becomes easier to determine whether you have achieved them or not. Measurable outcomes provide tangible evidence of success, enabling you to assess the effectiveness of your data analysis techniques.

Resource Optimization: By defining clear objectives, you can optimize the use of resources such as time, manpower, and technology. With a well-defined goal in mind, you can identify the tools, skills, or expertise required for successful data analysis. This helps allocate resources efficiently and reduces wasted effort.

To define clear objectives for advanced data analysis:

Start with a Problem Statement: Clearly articulate the problem or question that needs addressing through data analysis. Be as specific as possible to avoid ambiguity.

Determine Key Metrics: Identify the key metrics or indicators that will help measure progress towards achieving your objective(s). These metrics should align with your problem statement and provide a quantifiable way to assess success.

Consider Stakeholder Needs: Understand the needs and expectations of your stakeholders, whether they are clients, colleagues, or decision-makers. Incorporate their requirements into your objective-setting process to ensure alignment and relevance.

Break Down Objectives: If your analysis involves multiple aspects or stages, break down your objectives into smaller, manageable tasks. This approach allows for better tracking of progress and helps avoid feeling overwhelmed.
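One way to put these steps into practice is to record each objective as a small structured object that pairs the problem statement with a measurable metric, a target, and its sub-tasks. The `Objective` class, its fields, and the churn example below are hypothetical illustrations, not a standard format.

```python
from dataclasses import dataclass, field


@dataclass
class Objective:
    """A hypothetical record tying a problem statement to a measurable target."""
    problem_statement: str
    metric: str
    target: float
    higher_is_better: bool = True
    sub_tasks: list[str] = field(default_factory=list)

    def is_met(self, observed: float) -> bool:
        """Compare an observed metric value against the target."""
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


churn = Objective(
    problem_statement="Which customers are likely to cancel within 90 days?",
    metric="monthly churn rate (%)",
    target=5.0,
    higher_is_better=False,  # a lower churn rate counts as success
    sub_tasks=["collect 12 months of account data", "build churn model", "review with sales team"],
)
print(churn.is_met(4.2))  # True
print(churn.is_met(6.1))  # False
```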

Remember, clear objectives act as a guiding light throughout your data analysis journey. They provide clarity, focus, and a measurable framework for success. By defining clear objectives from the outset, you set yourself up for effective and impactful advanced data analysis.

Collect relevant and reliable data

Collecting Relevant and Reliable Data: The Foundation of Advanced Data Analysis Techniques

When it comes to advanced data analysis techniques, one crucial tip stands out among the rest: collecting relevant and reliable data. The success of any data analysis project heavily relies on the quality and suitability of the data being analyzed. Without accurate and meaningful data, even the most sophisticated analysis techniques will yield limited or misleading insights.

Relevance is key when selecting the data to be analyzed. It is important to identify what specific information is needed to answer the research question or address the business problem at hand. Collecting vast amounts of irrelevant data not only wastes time and resources but also increases the complexity of analysis without providing any meaningful insights.

To ensure relevance, it is essential to clearly define the objectives of the analysis and identify the specific variables or metrics that align with those objectives. This helps in determining what type of data needs to be collected and from which sources. By focusing on collecting targeted and purposeful data, analysts can streamline their efforts and obtain more accurate results.

Equally important is ensuring that the collected data is reliable. Reliable data refers to information that is accurate, consistent, complete, and free from bias or errors. Inaccurate or inconsistent data can lead to flawed conclusions and misguided decision-making.

To ensure reliability, it is crucial to establish robust data collection processes. This includes using standardized measurement techniques, implementing rigorous quality control measures, and validating the accuracy of collected data through cross-referencing with independent sources whenever possible.
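As a minimal sketch of cross-referencing, the function below compares values for the same records from two sources and flags records that are missing from either source or that disagree by more than a relative tolerance. The source names, record IDs, and 1% tolerance are hypothetical assumptions.

```python
import math


def cross_check(primary, reference, rel_tol=0.01):
    """Flag records whose values differ between two sources.

    primary, reference: dicts mapping record ID -> numeric value.
    Returns a dict mapping record ID -> reason for the flag.
    """
    issues = {}
    for key in primary.keys() | reference.keys():
        if key not in reference:
            issues[key] = "missing from reference"
        elif key not in primary:
            issues[key] = "missing from primary"
        elif not math.isclose(primary[key], reference[key], rel_tol=rel_tol):
            issues[key] = f"mismatch: {primary[key]} vs {reference[key]}"
    return issues


# Hypothetical monthly sales totals from a CRM export and a finance ledger
crm = {"2024-01": 1200.0, "2024-02": 1350.0, "2024-03": 990.0}
ledger = {"2024-01": 1200.0, "2024-02": 1530.0, "2024-04": 1100.0}
for month, reason in sorted(cross_check(crm, ledger).items()):
    print(month, reason)
```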

Moreover, paying attention to potential biases in data collection is essential. Biases can arise from various sources such as sampling methods, survey design, or human error during manual entry. Being aware of these biases allows analysts to take appropriate corrective measures or consider them when interpreting results.
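A simple way to surface one kind of sampling bias is to compare the make-up of a sample with known population shares, for example from census figures. The sketch below does this for a single categorical attribute; the region names, shares, and 5-percentage-point threshold are hypothetical.

```python
from collections import Counter


def representation_gaps(sample_labels, population_shares, threshold=0.05):
    """Return {category: sample_share - population_share} for gaps above threshold.

    Only categories listed in population_shares are checked.
    """
    counts = Counter(sample_labels)
    total = len(sample_labels)
    gaps = {}
    for category, expected in population_shares.items():
        observed = counts.get(category, 0) / total if total else 0.0
        gap = observed - expected
        if abs(gap) > threshold:
            gaps[category] = round(gap, 3)  # positive = over-represented
    return gaps


# Hypothetical survey respondents by region vs. known population shares
respondents = ["north"] * 52 + ["south"] * 33 + ["east"] * 15
population = {"north": 0.40, "south": 0.35, "east": 0.25}
print(representation_gaps(respondents, population))
```

Here the north is over-represented and the east under-represented, so results could be reweighted or the gap noted when interpreting them.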

By prioritizing relevance and reliability in data collection efforts, analysts set a solid foundation for advanced data analysis techniques. They ensure that their findings are based on trustworthy information that can drive informed decision-making, unlock valuable insights, and fuel innovation.

In conclusion, collecting relevant and reliable data is a fundamental tip for successful advanced data analysis. It keeps the analysis focused, improves accuracy, and reduces the risk of bias. By adhering to this principle, analysts can maximize the potential of advanced techniques and derive meaningful insights that have a tangible impact on businesses, research endeavors, and society as a whole.

Cleanse and preprocess your data

Cleanse and Preprocess Your Data: The Foundation of Effective Analysis

When it comes to advanced data analysis techniques, one crucial step often overlooked is the cleansing and preprocessing of data. Before diving into complex algorithms and models, it is essential to ensure that the data you are working with is accurate, consistent, and ready for analysis. This process sets the foundation for effective analysis and reliable insights.

Data cleansing involves identifying and rectifying errors, inconsistencies, or missing values within your dataset. It makes your data more reliable and removes erroneous entries that could skew your results. By cleaning your data, you reduce some sources of bias and increase the accuracy of your analyses.

Preprocessing goes hand in hand with data cleansing. It involves transforming raw data into a format suitable for analysis. This includes tasks such as removing duplicates, handling missing values, normalizing variables, or encoding categorical variables into numerical representations. Preprocessing prepares your data for advanced techniques by ensuring it meets the assumptions and requirements of specific algorithms.
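The preprocessing tasks listed above can be sketched with the Python standard library alone. The example assumes records arrive as dictionaries with one numeric field (`age`) and one categorical field (`plan`); those field names, and the choice of mean imputation and min-max scaling, are illustrative assumptions, and other strategies (median imputation, standardization) may suit a given dataset better.

```python
from statistics import mean


def preprocess(records, numeric="age", categorical="plan"):
    """Deduplicate, impute, min-max scale and one-hot encode a list of dicts."""
    # 1. Remove exact duplicate records while keeping the original order
    seen, rows = set(), []
    for r in records:
        key = tuple(sorted(r.items()))
        if key not in seen:
            seen.add(key)
            rows.append(dict(r))  # copy so the input is not mutated

    # 2. Fill missing numeric values with the mean of the observed values
    observed = [r[numeric] for r in rows if r.get(numeric) is not None]
    fill = mean(observed)
    for r in rows:
        if r.get(numeric) is None:
            r[numeric] = fill

    # 3. Min-max normalize the numeric column to the range [0, 1]
    lo = min(r[numeric] for r in rows)
    hi = max(r[numeric] for r in rows)
    span = (hi - lo) or 1  # avoid division by zero when all values are equal
    for r in rows:
        r[numeric] = (r[numeric] - lo) / span

    # 4. One-hot encode the categorical column
    categories = sorted({r[categorical] for r in rows})
    for r in rows:
        value = r.pop(categorical)
        for c in categories:
            r[f"{categorical}_{c}"] = 1 if value == c else 0
    return rows


raw = [
    {"age": 20, "plan": "basic"},
    {"age": 40, "plan": "pro"},
    {"age": 40, "plan": "pro"},      # exact duplicate
    {"age": None, "plan": "basic"},  # missing value
]
for row in preprocess(raw):
    print(row)
```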

Why is cleansing and preprocessing so important?

- Improved Accuracy: By eliminating errors and inconsistencies in your dataset, you remove a major source of inaccurate results. Cleaned data reduces the risk of making incorrect assumptions or drawing faulty conclusions.

- Enhanced Efficiency: Data cleansing reduces processing time by removing unnecessary or redundant information. Preprocessing streamlines your dataset to focus on relevant variables, saving computational resources and improving efficiency.

- Reliable Insights: Cleaned and preprocessed data provides a solid foundation for advanced techniques such as machine learning or statistical modeling. By ensuring the quality of your data upfront, you can place more trust in the insights these techniques produce when making decisions.

- Avoiding Bias: Data cleansing can correct some biases introduced by collection methods or human error, such as systematic recording mistakes or duplicated records. Biases that stem from how the data was sampled, however, usually cannot be removed by cleaning alone and should be addressed at collection time or accounted for when interpreting the results.

- Future-Proofing: Cleansing and preprocessing your data is not a one-time task. As new data becomes available, it is crucial to repeat this process to maintain the integrity of your analyses. This ensures that your insights remain relevant and accurate over time.

In conclusion, cleansing and preprocessing your data are fundamental steps in advanced data analysis techniques. By investing time and effort into these processes, you set the stage for accurate, reliable, and insightful analyses. Remember, the quality of your data directly impacts the quality of your results. So, take the time to cleanse and preprocess your data – it’s an investment that pays off in the long run.

Explore different statistical techniques

Explore Different Statistical Techniques: Unlocking the Full Potential of Data Analysis

When it comes to advanced data analysis techniques, one tip stands out: explore different statistical techniques. In the world of data analysis, there is no one-size-fits-all approach. Each statistical technique offers unique insights into your data, allowing you to uncover hidden patterns and make more informed decisions.

Statistical techniques provide a framework for understanding and interpreting data. By employing a variety of techniques, you can gain a comprehensive understanding of your data from different perspectives. Here are a few statistical techniques worth exploring:

- Descriptive Statistics: This technique focuses on summarizing and describing the main characteristics of a dataset. Measures such as mean, median, and standard deviation provide insights into the central tendency and variability of your data.

- Inferential Statistics: Inferential statistics allows you to draw conclusions about a population based on a sample. By analyzing sample data, you can make inferences or predictions about larger populations with a certain level of confidence.

- Hypothesis Testing: Hypothesis testing helps determine whether your data provide enough evidence to reject a specific claim about the population, known as the null hypothesis. It allows you to test assumptions and make statistically sound decisions.

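- Regression Analysis: Regression analysis examines the relationship between a dependent variable and one or more independent variables. It shows how the dependent variable tends to change as the independent variables change, enabling prediction or forecasting, although on its own it does not establish cause and effect.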

- Cluster Analysis: Cluster analysis groups similar objects together based on defined characteristics or similarities within the dataset. It aids in identifying distinct patterns or segments within your data.

- Factor Analysis: Factor analysis explores underlying factors that explain observed correlations among variables. It helps reduce complex datasets into more manageable dimensions while maintaining meaningful information.

- Time Series Analysis: Time series analysis focuses on data collected over time, identifying trends and seasonality and forecasting future values.
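To show how three of these techniques look in practice, the sketch below computes descriptive statistics, fits a simple least-squares regression line, and runs a permutation test (one form of hypothesis test) using only Python's `statistics` and `random` modules. The datasets, the number of permutations, and the fixed seed are hypothetical choices for illustration.

```python
import random
from statistics import mean, median, stdev

# Descriptive statistics: central tendency and spread of hypothetical daily sales
sales = [12, 15, 11, 18, 14, 16, 13]
print("mean", round(mean(sales), 2), "median", median(sales), "stdev", round(stdev(sales), 2))


def fit_line(x, y):
    """Regression: ordinary least-squares fit of y = intercept + slope * x."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sxy / sxx
    return my - slope * mx, slope


ad_spend = [1, 2, 3, 4, 5]
revenue = [3, 5, 7, 9, 11]  # constructed to lie exactly on 1 + 2 * ad_spend
intercept, slope = fit_line(ad_spend, revenue)
print("intercept", intercept, "slope", slope)  # intercept 1.0 slope 2.0


def permutation_test(a, b, n_perm=10_000, seed=0):
    """Hypothesis test: two-sided permutation test for a difference in means.

    Null hypothesis: the group labels are exchangeable (no real difference).
    Returns an estimated p-value.
    """
    rng = random.Random(seed)
    observed = abs(mean(a) - mean(b))
    pooled = list(a) + list(b)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)  # randomly reassign observations to the two groups
        diff = abs(mean(pooled[:len(a)]) - mean(pooled[len(a):]))
        if diff >= observed:
            extreme += 1
    # Add-one correction keeps the estimate strictly above zero
    return (extreme + 1) / (n_perm + 1)


control = [10.1, 9.8, 10.3, 10.0, 9.9]
treatment = [11.2, 11.0, 11.5, 10.9, 11.3]
print("p-value", round(permutation_test(control, treatment), 4))
```

A small p-value here means that a difference as large as the observed one rarely arises when group membership is shuffled at random.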

By exploring different statistical techniques, you can gain deeper insights into your data and extract valuable knowledge that may have otherwise remained hidden. Each technique offers a unique perspective and can uncover different aspects of your dataset.

Remember, the choice of statistical technique depends on the nature of your data, research objectives, and the questions you seek to answer. It’s essential to understand the strengths and limitations of each technique and select the most appropriate one for your specific analysis.

In conclusion, exploring different statistical techniques is a valuable tip when engaging in advanced data analysis. By embracing a diverse range of techniques such as descriptive statistics, inferential statistics, hypothesis testing, regression analysis, cluster analysis, factor analysis, and time series analysis, you can unlock the full potential of your data and gain comprehensive insights that drive meaningful outcomes.

Utilize advanced visualization tools

Utilize Advanced Visualization Tools: Unleashing the Power of Data Insights

In the realm of advanced data analysis techniques, one tip stands out as a game-changer: utilizing advanced visualization tools. These tools have the ability to transform complex datasets into visually appealing and easily understandable representations, enabling us to uncover hidden patterns, trends, and insights that might otherwise go unnoticed.

Data visualization is not a new concept. From simple bar charts to line graphs, visual representations have long been used to present data in a more accessible format. However, with advancements in technology and the availability of robust visualization software, we now have access to an array of powerful tools that take data visualization to a whole new level.

By leveraging advanced visualization tools, we can bring data to life in dynamic and interactive ways. Here’s why this tip is essential:

- Enhanced Understanding: Visualizing data allows us to perceive information more intuitively. Complex relationships and trends become apparent at a glance, enabling faster comprehension and deeper insights. With interactive features like zooming or filtering, we can delve into specific aspects of the data for a more detailed analysis.

- Improved Communication: Visualizations are an effective means of communicating complex information to diverse audiences. By presenting data in visually compelling formats such as charts, graphs, maps, or infographics, we can convey key messages clearly and engage stakeholders more effectively. Whether it’s presenting research findings or making business decisions based on data-driven insights, visualizations facilitate effective communication.

- Exploration and Discovery: Advanced visualization tools empower users to explore datasets from multiple angles and perspectives. By manipulating variables or changing parameters on-the-fly, we can uncover new patterns or relationships that were previously hidden. This exploratory approach fuels innovation and opens up new avenues for research and problem-solving.

- Storytelling with Data: Visualizations enable us to tell compelling stories with our data. By combining different visual elements like color coding or animation, we can guide viewers through a narrative, highlighting important trends or outliers. This storytelling aspect adds context and meaning to the data, making it more relatable and memorable.

When it comes to advanced visualization tools, there is a wide range of options available. From industry-standard software like Tableau or Power BI to open-source libraries like D3.js and Plotly, the choice depends on specific needs and expertise. Regardless of the tool chosen, the key lies in leveraging its capabilities to transform data into visually captivating representations.

In conclusion, utilizing advanced visualization tools is a crucial tip for harnessing the power of data analysis techniques. By adopting these tools, we can enhance our understanding of complex datasets, improve communication with stakeholders, facilitate exploration and discovery, and tell compelling stories with our data. So let’s embrace these tools and unlock the full potential of our data-driven insights.

Apply machine learning algorithms

Unlocking Insights: The Power of Applying Machine Learning Algorithms in Data Analysis

In the realm of advanced data analysis techniques, one approach stands out as a game-changer: applying machine learning algorithms. With their ability to learn from data and make predictions or classifications, machine learning algorithms have revolutionized the way businesses and researchers analyze vast amounts of information.

Machine learning algorithms are designed to automatically identify patterns, relationships, and trends within complex datasets. When trained on historical data, they can uncover hidden insights and provide valuable predictions for future outcomes. This capability has opened up new possibilities across many industries and domains.

One of the key advantages of applying machine learning algorithms is their adaptability to different types of data. There are machine learning methods for structured data like numerical values as well as for unstructured data like text or images, although unstructured data typically needs more preprocessing and specialized models. This flexibility allows businesses to extract insights from a wide range of sources and make informed decisions based on comprehensive analyses.

The applications of machine learning in data analysis are vast:

– Predictive Analytics: By learning from historical data, machine learning algorithms can predict future trends or outcomes, with an accuracy that depends on the quality of the data and on how stable the underlying patterns are. From forecasting sales figures to anticipating customer behavior, predictive analytics powered by machine learning enables businesses to stay ahead of the curve.

– Anomaly Detection: Machine learning algorithms excel at identifying unusual patterns or outliers within datasets. This capability is particularly valuable in fraud detection, cybersecurity, and quality control processes where anomalies can have significant implications.

– Personalization: Machine learning enables businesses to personalize their offerings based on individual preferences and behavior patterns. From tailored product recommendations to customized marketing campaigns, personalization powered by machine learning enhances customer satisfaction and drives engagement.

– Natural Language Processing: Machine learning algorithms can understand and analyze human language through natural language processing techniques. This capability opens doors for sentiment analysis, chatbots for customer support, language translation services, and more.
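Anomaly detection does not always require a trained model. As a simple statistical baseline (not a machine learning algorithm), the sketch below flags outliers with the modified z-score, which relies on the median and the median absolute deviation (MAD) so that the outliers themselves do not distort the yardstick. The 0.6745 constant and 3.5 cut-off follow a commonly used convention; the transaction amounts are hypothetical.

```python
from statistics import median


def modified_z_outliers(values, threshold=3.5):
    """Return indices of values whose modified z-score exceeds the threshold."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # at least half the values equal the median; score is undefined
    return [
        i for i, v in enumerate(values)
        if abs(0.6745 * (v - med) / mad) > threshold
    ]


# Hypothetical card transactions (in euros); one is far outside the usual range
amounts = [42.0, 38.5, 45.2, 40.1, 39.9, 41.7, 950.0, 43.3, 37.8, 44.0]
print(modified_z_outliers(amounts))  # [6]
```

A machine learning approach (for example, a model trained on labelled fraud cases) could replace this baseline once enough historical data is available.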

To apply machine learning algorithms effectively in data analysis, it is crucial to have a robust understanding of the underlying concepts and methodologies. Data preprocessing, feature engineering, model selection, and evaluation are all essential steps in the process. Additionally, having access to quality data and ensuring its integrity is vital for accurate results.
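The workflow described above (prepare features, choose a model, evaluate it on data it has not seen) can be sketched end to end with a k-nearest-neighbours classifier in plain Python. This is an illustrative choice of algorithm, not a recommendation; the customer features, labels, and k = 3 are hypothetical, and in practice a library such as scikit-learn would normally be used.

```python
import math
from collections import Counter


def knn_predict(train_X, train_y, x, k=3):
    """Predict a label for x by majority vote among its k nearest training points."""
    dists = sorted((math.dist(p, x), label) for p, label in zip(train_X, train_y))
    votes = Counter(label for _, label in dists[:k])
    return votes.most_common(1)[0][0]


def accuracy(train_X, train_y, test_X, test_y, k=3):
    """Share of held-out examples the model labels correctly."""
    hits = sum(knn_predict(train_X, train_y, x, k) == y for x, y in zip(test_X, test_y))
    return hits / len(test_y)


# Hypothetical features: (monthly visits, average basket size), already scaled to [0, 1]
train_X = [(0.1, 0.2), (0.2, 0.1), (0.15, 0.25), (0.8, 0.9), (0.9, 0.8), (0.85, 0.7)]
train_y = ["occasional", "occasional", "occasional", "loyal", "loyal", "loyal"]
test_X = [(0.12, 0.18), (0.88, 0.85)]
test_y = ["occasional", "loyal"]

print(knn_predict(train_X, train_y, (0.7, 0.75)))    # loyal
print(accuracy(train_X, train_y, test_X, test_y))    # 1.0
```

Because k-nearest neighbours relies on distances, scaling the features first (as in the preprocessing step) matters: otherwise the feature with the largest range dominates.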

As machine learning continues to advance rapidly, it is becoming more accessible to businesses of all sizes. With cloud-based platforms and user-friendly tools available, organizations can leverage machine learning algorithms without extensive technical expertise. This democratization of machine learning empowers businesses to harness the power of data analysis and gain a competitive edge.

However, it’s important to note that applying machine learning algorithms requires careful consideration of ethical implications. Privacy protection, transparency in decision-making processes, and the mitigation of potential biases must be addressed throughout the analysis.

In conclusion, applying machine learning algorithms has transformed the landscape of data analysis. By leveraging their ability to learn from data and make predictions or classifications, businesses can unlock valuable insights, drive innovation, and make informed decisions in an increasingly data-driven world. Embracing this powerful technique opens up new possibilities for growth and success across industries.

Validate results through rigorous testing

Validate Results Through Rigorous Testing: Ensuring the Accuracy of Advanced Data Analysis Techniques

When it comes to advanced data analysis techniques, one crucial aspect that cannot be overlooked is the validation of results. In a rapidly evolving field where decisions are made based on data-driven insights, it is paramount to ensure the accuracy and reliability of the analysis.

Rigorous testing serves as a vital step in the validation process. It involves subjecting the data analysis techniques to various tests and scenarios to assess their performance and verify the credibility of the results. Let’s delve into why rigorous testing is essential and how it can benefit businesses and researchers:

- Detecting Errors: No matter how advanced or sophisticated an algorithm or model may be, errors can still occur. Rigorous testing helps identify potential flaws in the analysis process, whether they stem from faulty assumptions, inadequate data quality, or algorithmic biases. By uncovering these errors early on, corrective actions can be taken to improve the accuracy of the results.

- Assessing Robustness: Robustness refers to how well an analysis technique performs across different datasets or conditions. Rigorous testing allows researchers to evaluate whether their chosen technique holds up consistently under various scenarios. This assessment ensures that the technique is not overly dependent on specific datasets or biased towards particular conditions, thus enhancing its generalizability.

- Comparing Performance: Rigorous testing enables researchers to compare different analysis techniques against each other objectively. By employing benchmark datasets or simulated scenarios, they can evaluate which technique produces more accurate results or better aligns with their specific objectives. This comparison helps in selecting the most suitable technique for a given task.

- Building Trust: In any data-driven decision-making process, trust in the results is paramount. Rigorous testing instills confidence by providing evidence that supports the validity of the analysis techniques used. This trust becomes crucial when making critical business decisions or when research findings have far-reaching implications.
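One way to probe robustness is to check how much a result moves when the data are resampled. The sketch below uses the bootstrap (resampling with replacement) to build a percentile confidence interval for a mean; a wide interval signals that the estimate depends heavily on which observations happened to be collected. The data, 2,000 resamples, and fixed seed are hypothetical choices.

```python
import random
from statistics import mean


def bootstrap_ci(data, stat=mean, n_boot=2000, alpha=0.05, seed=42):
    """Percentile bootstrap confidence interval for stat(data)."""
    rng = random.Random(seed)
    # Recompute the statistic on many resamples drawn with replacement
    estimates = sorted(stat(rng.choices(data, k=len(data))) for _ in range(n_boot))
    lower = estimates[round(alpha / 2 * n_boot)]
    upper = estimates[round((1 - alpha / 2) * n_boot) - 1]
    return lower, upper


# Hypothetical conversion rates (%) observed on ten separate days
rates = [2.1, 2.4, 1.9, 2.8, 2.2, 2.5, 2.0, 3.1, 2.3, 2.6]
low, high = bootstrap_ci(rates)
print(f"mean {mean(rates):.2f}%, 95% CI roughly {low:.2f}% to {high:.2f}%")
```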

To ensure the effectiveness of rigorous testing, several best practices should be followed:

– Use diverse datasets: Testing should involve a variety of datasets that represent the real-world scenarios the analysis technique is intended to address. This diversity helps uncover any limitations or biases that may exist.

– Employ cross-validation techniques: Cross-validation splits the data into several subsets (folds), repeatedly fits the analysis technique on all but one fold, and tests it on the fold held out. Comparing performance across folds helps assess the stability and consistency of the results.

– Collaborate and seek peer review: Engaging with other experts in the field and seeking peer review can provide valuable insights and constructive criticism. This external validation helps identify and address blind spots.
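Cross-validation itself takes only a few lines. The sketch below performs k-fold cross-validation: each fold is held out once as a test set while a model is fitted on the remaining folds, and the per-fold errors are compared. The "model" is a deliberately simple least-squares line and the data are hypothetical; any fit/predict pair could be substituted.

```python
from statistics import mean


def fit_line(x, y):
    """Ordinary least squares for y = a + b * x; returns (a, b)."""
    mx, my = mean(x), mean(y)
    b = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sum((xi - mx) ** 2 for xi in x)
    return my - b * mx, b


def k_fold_mse(x, y, k=5):
    """Mean squared error on each held-out fold of a k-fold split (no shuffling)."""
    n = len(x)
    folds = [list(range(i, n, k)) for i in range(k)]  # round-robin fold assignment
    errors = []
    for test_idx in folds:
        held_out = set(test_idx)
        train = [i for i in range(n) if i not in held_out]
        a, b = fit_line([x[i] for i in train], [y[i] for i in train])
        errors.append(mean((y[i] - (a + b * x[i])) ** 2 for i in test_idx))
    return errors


# Hypothetical data: y is roughly 2x + 1 with small noise
x = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
y = [3.1, 4.9, 7.2, 8.8, 11.1, 13.0, 14.9, 17.2, 18.8, 21.1]
fold_errors = k_fold_mse(x, y, k=5)
print([round(e, 3) for e in fold_errors])
print("average MSE:", round(mean(fold_errors), 3))
```

Similar errors across folds suggest stable results; one fold with a much larger error points to data the technique does not handle well. With time-ordered data, a split that respects chronology is usually more appropriate than this round-robin assignment.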

In conclusion, validating results through rigorous testing is a critical step in advanced data analysis techniques. By subjecting these techniques to thorough examination, errors can be detected, robustness can be assessed, performance can be compared, and trust can be established. Embracing this practice ensures that businesses make informed decisions based on reliable insights while researchers contribute to scientific knowledge with confidence.

Communicate results effectively

Communicate Results Effectively: Unlocking the Value of Advanced Data Analysis Techniques

In the realm of advanced data analysis techniques, uncovering valuable insights is only half the battle. The true power lies in effectively communicating those results to stakeholders, decision-makers, and the broader audience. Without clear and concise communication, even the most groundbreaking findings may go unnoticed or fail to drive meaningful action.

When it comes to conveying complex data analysis outcomes, here are some key considerations for effective communication:

- Know Your Audience: Tailoring your message to suit your audience is paramount. Understand their level of technical expertise and adapt your communication style accordingly. Use plain language, avoid jargon, and focus on conveying the most relevant insights that align with their interests or goals.

- Visualize Data: Visual representations can be a powerful tool for conveying information quickly and intuitively. Utilize charts, graphs, infographics, or interactive dashboards to present key findings in a visually appealing manner. Clear visualizations help stakeholders grasp complex concepts more easily and facilitate better comprehension of the results.

- Tell a Story: Weaving a narrative around your data analysis results can make them more engaging and memorable. Presenting findings within a compelling storyline helps stakeholders understand the context, significance, and implications of the insights gained. Connect the dots between data points to create a coherent narrative that resonates with your audience.

- Provide Context: Data analysis outcomes should never exist in isolation; they need to be contextualized within broader business objectives or research goals. Explain how your findings relate to existing knowledge or industry trends. Highlight how they address specific challenges or opportunities faced by your organization or field of study.

- Be Transparent about Limitations: No data analysis technique is perfect, and it’s crucial to acknowledge and communicate any limitations or uncertainties associated with your findings. Discuss potential biases in data collection or model assumptions that may impact the results’ accuracy. Transparently addressing limitations builds trust and ensures a more nuanced understanding of the insights.

- Use Real-World Examples: Whenever possible, illustrate your findings with real-world examples or case studies. Concrete examples help stakeholders relate to the results and visualize how they can be applied in practical situations. By showcasing tangible benefits or potential impact, you can inspire action and foster enthusiasm for data-driven decision-making.

- Encourage Dialogue: Communication should be a two-way process. Encourage questions, feedback, and discussion from your audience. This fosters a collaborative environment where stakeholders can provide valuable insights or raise concerns that may influence the interpretation or further analysis of the data.

In conclusion, effective communication of advanced data analysis results is essential to unlock their true value. By tailoring your message to the audience, utilizing visualizations, storytelling, providing context, being transparent about limitations, using real-world examples, and encouraging dialogue, you can ensure that your insights are understood, appreciated, and acted upon. Remember: it’s not just about what you discover; it’s about how effectively you communicate it to make an impact.
